- Day 1: Setting Up Your Study OS (Current)

- Day 2: The Research Revolution

- Day 3: Mastering NotebookLM

- Day 4: Field-Specific Power Moves

- Day 5: Writing with Integrity

- Day 6: Career Jumpstart

- Day 7: Personal AI Agents

There is a big difference between using AI and building a way of thinking with AI.

Right now, too many students meet tools like ChatGPT, Gemini, or Claude at the most fragile point in the learning process: when they are tired, behind schedule, confused, and a little desperate. That is exactly when the temptation appears. “Just give me the answer.” “Write the paragraph.” “Solve the problem.” “Summarize the chapter so I can survive tomorrow’s test.”

It feels efficient. Sometimes it even feels smart.

But a shortcut taken too early is not efficiency. It is often borrowed confidence.

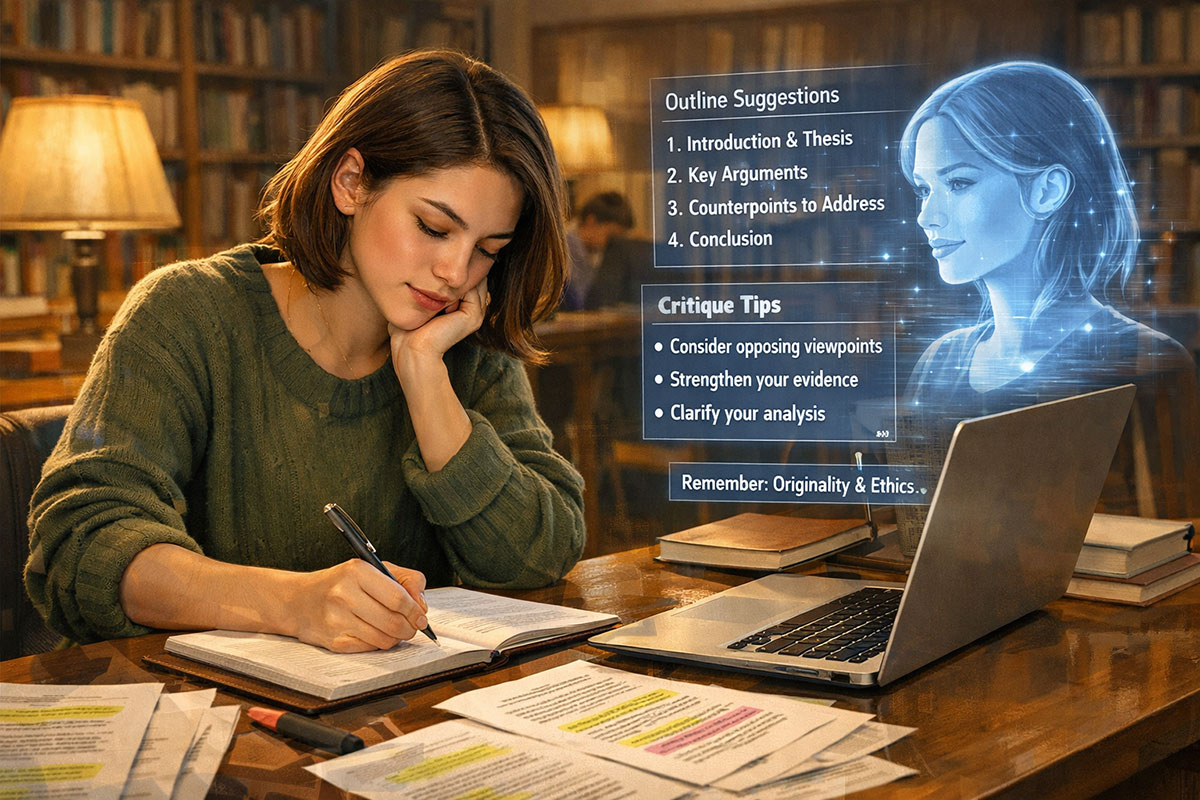

The real opportunity of this moment is not that AI can produce polished text in seconds. It is that AI can become a patient tutor, a questioning partner, a practice examiner, a thinking mirror, and a personalized study coach that does not get tired of your confusion. Used badly, it can flatten curiosity. Used well, it can sharpen it.

The students who win in the AI era will not be the ones who ask for the fastest answer. They will be the ones who design the best learning system.

That is where the idea of a Study OS matters. Not an app. Not a productivity buzzword. A system. A repeatable setup that tells your AI how to behave before you start studying, so the tool helps you think instead of helping you bypass thinking.

This first article in the series is about one foundational shift: moving from “AI as a cheating tool” to “AI as a Socratic tutor.” That shift is ethical, practical, and increasingly necessary as schools and universities rethink what learning looks like in an AI-rich world. TrendFlash has already explored both the ethics of responsible AI use and the broader tension inside modern education, where institutions are trying to balance AI adoption with real cognitive growth.

Why Most Students Use AI Wrong

Let’s start with the uncomfortable truth. Most students do not fail with AI because the tools are weak. They fail because their default relationship with the tool is passive.

They open a chat box the way previous generations opened a search bar: with urgency, not intention. The goal is immediate relief. A completed answer. A cleaner paragraph. A solved equation. A shortcut around the friction that real learning creates.

But friction is not always the enemy.

That headache you feel when you are wrestling with an idea? That is often the mental work that builds durable understanding. Remove all of it, and you may submit something polished while learning almost nothing. That is one reason the debate around AI in education has become so intense. As universities expand AI integration, the core concern is no longer whether students will use AI. It is whether they will use it in ways that strengthen or weaken reasoning.

This is also where ethics stops being abstract. The cheating question is not only about breaking rules. It is about whether you are outsourcing your mind at the exact moment it is supposed to grow. The broader responsible-AI conversation increasingly comes back to issues like accountability, transparency, and the consequences of using powerful systems without clear guardrails. TrendFlash’s AI ethics coverage frames that challenge in accessible terms: the question is not simply “Can AI do this?” but “What happens when humans stop thinking carefully about how it should be used?”

Students also use AI badly because they treat prompting as a request for output rather than a way to shape behavior. That is a subtle distinction, but it changes everything. If your prompt says, “Solve this,” you get an answer machine. If your prompt says, “Act as a tutor who asks one question at a time and does not reveal the final answer until I attempt it,” you get something closer to guided learning.

That is why this series starts here. Before research workflows. Before NotebookLM. Before field-specific hacks. Before AI career strategy. You need a learning posture first.

AI does not automatically make a student smarter. It often amplifies the learning habits already in place.

If your habit is avoidance, AI can automate avoidance. If your habit is inquiry, AI can accelerate inquiry.

What a Study OS Actually Is

So what is a Study OS in practical terms?

It is the set of rules, prompts, checkpoints, and habits that govern how you use AI during study sessions. Think of it as the difference between wandering into a library and having a study plan waiting on the table. One is reactive. The other is designed.

A strong Study OS usually has four layers.

First, the role layer. You tell the AI who it is for this session. Not a generic assistant. A tutor. A debate partner. A quizmaster. A concept explainer for your grade level. A writing coach that refuses to draft your final paragraph.

Second, the behavior layer. You specify how it should respond. Ask questions first. Reveal one step at a time. Use simple language. Challenge weak reasoning. Offer hints before solutions. Ask me to summarize what I learned before moving on.

Third, the boundaries layer. You decide what the AI must not do. Do not write my final assignment. Do not give me the complete answer until I attempt it. Do not invent sources. Tell me when you are unsure. Flag possible mistakes or hallucinations.

Fourth, the reflection layer. At the end of the session, the AI should help you consolidate. What did I misunderstand? What are the top three ideas to remember? What should I review tomorrow? What question would a teacher ask to test whether I really understood this?

This is not theoretical. It is very close to the workflow logic behind the most effective student prompt systems already gaining traction, where the best results come from prompting AI to guide process rather than just deliver product. That same principle shows up in TrendFlash’s student workflow piece about using tools to study faster without cheating.
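The four layers can also be written down as a small data structure, so the prompt gets assembled the same way every session instead of being retyped from memory. What follows is a minimal Python sketch under stated assumptions: the `StudyOS` class, its field names, and the `render` method are hypothetical names chosen for illustration and are not part of any AI tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class StudyOS:
    """Hypothetical container for the four layers: role, behavior, boundaries, reflection."""
    role: str
    behaviors: list[str] = field(default_factory=list)
    boundaries: list[str] = field(default_factory=list)
    reflection: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the four layers into one system prompt string."""
        sections = [
            ("How to respond", self.behaviors),
            ("What you must not do", self.boundaries),
            ("At the end of the session", self.reflection),
        ]
        lines = [f"You are {self.role}."]
        for title, items in sections:
            if items:  # skip any layer that was left empty
                lines.append("")
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


physics_tutor = StudyOS(
    role="my Socratic tutor for 10th-grade physics",
    behaviors=["Ask one question at a time.", "Offer hints before solutions."],
    boundaries=[
        "Do not give the complete answer until I attempt it.",
        "Tell me when you are unsure.",
    ],
    reflection=["Ask me for the top three ideas to remember."],
)

print(physics_tutor.render())
```

The printed text can be pasted into whichever AI tool you use. Keeping the layers as separate lists makes it easy to change one boundary or one reflection question without rewriting the whole prompt.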

Here is a simple comparison:

| Approach | What the Student Asks | What Usually Happens | Long-Term Effect |
|---|---|---|---|
| Answer Machine | “Solve this for me.” | Fast output, low struggle | Weak retention, false confidence |
| Paraphrase Machine | “Rewrite this so it sounds better.” | Cleaner language, limited understanding | Improved submission, limited skill growth |
| Socratic Tutor | “Ask me questions and guide me step by step.” | Slower progress, deeper engagement | Better reasoning, better memory, stronger independence |

The third approach can feel frustrating at first because it is less magical. It does not instantly rescue you. It asks something from you. That is exactly why it works.

How to Write a Socratic Tutor System Prompt

Now for the part that changes behavior fast: the actual system prompt.

A system prompt is not just a fancy instruction. It is the operating logic for the conversation. It tells the model how to behave before the back-and-forth begins. Students who learn this early gain an unfair advantage, because they stop improvising each study session and start getting more consistent outcomes.

Here is a practical Day 1 version you can adapt:

Socratic Tutor System Prompt

You are my Socratic tutor. Your job is to help me understand concepts deeply rather than give me direct answers immediately.

- Ask one question at a time.

- Do not give the final answer until I attempt it first.

- Break difficult ideas into smaller steps.

- If I am wrong, explain why gently and clearly.

- Use examples suited to my level.

- After each explanation, ask me to restate the idea in my own words.

- If I ask for the answer too quickly, guide me back with a hint.

- At the end, quiz me with 3 short questions and summarize what I still need to practice.

That alone is a meaningful upgrade. But the best prompts are not generic forever. They are contextual. Add the subject, your level, and your pain point.

For example:

“I am a 10th-grade student studying Newton’s laws. I get confused when distinguishing inertia, force, and acceleration. Teach me slowly using simple examples from daily life. Do not assume I understand the formulas yet.”

Notice what is happening here. You are no longer asking AI to perform intelligence for you. You are using AI to organize a learning interaction around your actual weakness.
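One way to keep the reusable prompt and the session context separate is to store them as two pieces and combine them when a session starts. The sketch below assumes the common chat convention of role-tagged messages (`system` and `user`); the exact format your tool expects may differ, and `BASE_PROMPT`, `build_session`, and its parameter names are hypothetical.

```python
BASE_PROMPT = (
    "You are my Socratic tutor. Ask one question at a time. "
    "Do not give the final answer until I attempt it first."
)


def build_session(level: str, topic: str, confusion: str) -> list[dict[str, str]]:
    """Return role-tagged messages: the reusable prompt plus today's context."""
    for name, value in (("level", level), ("topic", topic), ("confusion", confusion)):
        if not value.strip():
            raise ValueError(f"{name} must not be empty")
    context = (
        f"I am a {level} student studying {topic}. "
        f"I get confused when {confusion}. "
        "Teach me slowly using simple examples from daily life."
    )
    return [
        {"role": "system", "content": BASE_PROMPT},
        {"role": "user", "content": context},
    ]


messages = build_session(
    level="10th-grade",
    topic="Newton's laws",
    confusion="distinguishing inertia, force, and acceleration",
)
for message in messages:
    print(f"[{message['role']}] {message['content']}")
```

If your tool has no separate system field, pasting the two pieces in order as your first message is a reasonable fallback. The empty-value check is there because a prompt without a level, topic, or confusion point quietly falls back to being generic.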

There should also be a small integrity checklist attached to your Study OS. Use this before any serious study session:

Study OS Checklist

- Did I define the AI’s role clearly?

- Did I tell it not to give the final answer too early?

- Did I include my level, topic, and confusion point?

- Did I ask for questions, hints, and explanation instead of output?

- Did I plan to verify claims, formulas, or sources independently?

- Did I leave time to summarize the concept in my own words?
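If you prefer something you can run before a session, the checklist above fits in a few lines of Python. This is only an illustrative sketch: the keys in `CHECKLIST` and the `unchecked_items` helper are hypothetical names, and the answers are your own honest yes or no, not anything the code can verify for you.

```python
CHECKLIST = {
    "role": "Did I define the AI's role clearly?",
    "no_early_answer": "Did I tell it not to give the final answer too early?",
    "context": "Did I include my level, topic, and confusion point?",
    "process": "Did I ask for questions, hints, and explanation instead of output?",
    "verify": "Did I plan to verify claims, formulas, or sources independently?",
    "summary": "Did I leave time to summarize the concept in my own words?",
}


def unchecked_items(answers: dict[str, bool]) -> list[str]:
    """Return the checklist questions that were not answered with True."""
    return [question for key, question in CHECKLIST.items() if not answers.get(key, False)]


# Example self-assessment: verification and the final summary were forgotten.
answers = {"role": True, "no_early_answer": True, "context": True, "process": True}
remaining = unchecked_items(answers)

if remaining:
    print("Before you start, fix these:")
    for question in remaining:
        print(f"- {question}")
else:
    print("Ready to study.")
```

Any item missing from `answers` counts as unchecked, so a skipped question is treated the same as a "no".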

This kind of setup aligns with where education is heading. Schools and universities are increasingly moving toward models that value process, verification, and metacognitive awareness rather than blind output. In other words, the future may belong less to students who can produce a polished AI-assisted page and more to those who can explain how they thought through the problem.

Real-Life Scenario: Newton’s Laws Finally Make Sense

Imagine a student named Aarav in 10th-grade physics.

He is not lazy. He is overwhelmed.

The chapter on Newton’s laws has turned into one of those subjects that feels easy when the teacher explains it on the board and impossible the moment he studies alone. He keeps mixing up force and motion. He memorizes definitions, but when the textbook changes the wording, his confidence disappears. When homework asks him to explain why passengers jerk forward when a bus stops suddenly, he freezes.

So he does what millions of students now do. He opens an AI tool and types: “Explain Newton’s laws.”

The answer comes back beautifully formatted. Clear headings. Perfect language. Even examples. But after reading it, he still cannot apply the idea to a new problem. It felt smart. It did not make him smart.

The next evening, he tries a different approach. He pastes in a Socratic tutor prompt. He tells the AI he is a 10th-grade student and that he gets confused by the idea that a moving object keeps moving unless a force changes it. He adds one rule: “Don’t tell me the answer first. Ask me questions.”

The AI begins simply: “What happens to a football after you kick it if nobody touches it again?” Aarav answers, “It slows down.” The AI asks, “Why do you think it slows down?” He says, “Because motion ends.” The AI gently pushes back: “Or does something in the real world act on it?” That leads to friction. Air resistance. Surface contact. Suddenly the idea of inertia is not a textbook sentence. It is something he can see.

Then the AI asks him to compare a book resting on a table with a cricket ball flying through the air. Different cases, same principle. It asks for examples from a bicycle, a school bus, and a shopping cart. Each time Aarav has to attempt an answer. Each time the AI corrects the concept without humiliating him.

By the time they reach Newton’s second law, he is no longer staring at formulas like they are random symbols. The AI asks, “If you push an empty cart and a loaded cart with the same force, which accelerates more?” Now he can reason it out. The formula comes later, after intuition. That order matters.

At the end of the session, the AI gives him three mini questions and asks him to explain each law in his own words. For the first time, he is not copying an essay. He is building a framework.

That is the power of a Study OS. Not magic. Not instant brilliance. Structured struggle with intelligent guidance.

The Risks and the Right Boundaries

It would be irresponsible to pretend this approach has no downside.

AI tutoring can absolutely improve learning, but only when students stay alert to the risks. The first danger is dependence. A student can become so used to guided prompting that independent problem-solving weakens. The second is accuracy. Even a well-prompted model can sound confident while being wrong. The third is compliance drift: a tutor prompt starts as a learning aid, then quietly becomes “just help me finish this assignment.”

Those are not minor concerns. They are central. In fact, much of the current higher-education debate revolves around exactly this tension: AI can personalize learning and reduce routine workload, but over-reliance can erode reasoning if students stop checking, questioning, and thinking for themselves.

Still, the answer is not panic. It is boundary design.

Pros: A strong Study OS gives confused students a low-judgment environment to ask “basic” questions. It supports repetition. It adapts explanations. It can turn a lonely, frustrating subject into an interactive learning loop. It is especially useful when students are embarrassed to admit what they do not understand in class.

Concerns: If the system is not designed well, students may confuse polished interaction with real mastery. They may skip source-checking, avoid difficult writing, or let the AI become their substitute thinker. That risk becomes sharper in subjects requiring evidence, interpretation, and original argument.

The healthy middle path looks like this:

- Use AI to understand concepts, not to impersonate your final thinking.

- Use AI to rehearse, question, and test yourself.

- Verify facts, formulas, and especially sources outside the model.

- Keep a line between tutoring and submission help.

- End every session with your own summary, not the AI’s summary alone.

This balance also fits the broader future-of-education question. AI is not leaving the classroom. The real issue is whether students will become more intellectually capable through guided augmentation or mentally passive through over-automation. That is not a technology question alone. It is a habit question.

And that is why Day 1 matters so much. Before you speed up learning, you must decide what kind of learner you want AI to help you become.

FAQ: Building an AI Study OS the Smart Way

1. What exactly makes a “Socratic tutor” prompt better than a normal prompt?

A normal prompt usually asks for output. A Socratic tutor prompt asks for a learning interaction. That difference changes the entire session. Instead of receiving a finished answer, you are pushed to think, attempt, revise, and explain. This mirrors what good teachers often do in person: they do not simply dump information on you; they help you discover where your understanding breaks down. That matters because deep learning usually comes from active retrieval, explanation, and correction. A Socratic prompt slows the process down in a productive way. It makes you participate. It also exposes weak spots that polished AI answers tend to hide. In practical terms, this means you retain more, understand more, and become less likely to panic when the exam asks the same concept in a slightly different form.

2. Isn’t this still a form of cheating if I use AI while studying?

Not automatically. The ethical line depends on what you are asking the system to do and what your school allows. Using AI to explain a difficult idea, generate practice questions, or guide you through a concept step by step is very different from asking it to complete an assignment you are supposed to write or solve independently. That distinction is becoming more important as educators rethink responsible AI use. The goal is to use the tool as support for learning, not as a disguise for missing learning. A good rule is this: if the AI is replacing your own intellectual work on graded output, you are in dangerous territory. If it is helping you understand, rehearse, and reflect before you do your own work, you are on much stronger ground. Responsible use is about preserving authorship, honesty, and thinking.

3. Can this method work for subjects other than science?

Absolutely. In fact, a Study OS becomes even more powerful when you adapt it by discipline. In history, the AI can ask you to compare causes, challenge weak interpretations, or test whether you can support a claim with evidence. In literature, it can push you to defend a theme or unpack why a character decision matters. In math, it can withhold the final step and ask you to explain your reasoning line by line. In language learning, it can quiz you, role-play conversations, or correct grammar without switching entirely into answer mode. The key is not the subject. The key is the structure. Define the role, behavior, boundaries, and reflection pattern. Once you understand that architecture, you can build different tutor modes for different classes without starting from scratch every time.
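To show what "different tutor modes without starting from scratch" could look like, here is a small Python sketch that layers subject-specific behavior on top of shared rules. The names `SHARED_RULES`, `SUBJECT_MODES`, and `tutor_prompt` are hypothetical, and the per-subject lines are paraphrased from the examples in this answer rather than taken from any tool.

```python
# Rules every tutor mode shares, regardless of subject.
SHARED_RULES = [
    "Ask one question at a time.",
    "Do not give the final answer until I attempt it first.",
    "At the end, ask me to summarize what I learned in my own words.",
]

# Subject-specific behavior, paraphrased from the examples in the text.
SUBJECT_MODES = {
    "history": "Ask me to compare causes and support each claim with evidence.",
    "literature": "Push me to defend a theme and explain why a character's decision matters.",
    "math": "Withhold the final step and ask me to explain my reasoning line by line.",
    "language": "Quiz me, role-play conversations, and correct my grammar.",
}


def tutor_prompt(subject: str) -> str:
    """Build a subject-specific tutor prompt on top of the shared rules."""
    if subject not in SUBJECT_MODES:
        raise KeyError(f"No tutor mode defined for {subject!r}")
    lines = [f"You are my Socratic tutor for {subject}.", SUBJECT_MODES[subject]]
    lines.extend(f"- {rule}" for rule in SHARED_RULES)
    return "\n".join(lines)


print(tutor_prompt("math"))
```

Adding a new class then means adding one entry to `SUBJECT_MODES`; the boundaries and the reflection habit stay the same across every subject.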

4. What should I do when the AI gives a wrong explanation confidently?

Treat that moment as part of your Study OS, not as an exception. AI models can sound authoritative even when they are mistaken, incomplete, or oversimplified. That is why verification needs to be built into the workflow. For factual subjects, compare key claims with your textbook, teacher notes, or trusted external references. For formula-based work, plug the steps back into the original problem. For research topics, do not rely on the model for source accuracy alone. Ask it to identify uncertainty, then verify independently. You can even add a line to your system prompt that says, “If you are unsure, say so clearly rather than guessing.” The mistake many students make is assuming a polished explanation equals a correct one. Your job is not to distrust everything, but to remain intellectually awake. A good Study OS helps you use AI as a guide, not an unquestioned authority.

5. How long should a study session with AI actually be?

Long enough to create understanding, short enough to avoid passivity. For most students, 20 to 40 minutes is a reasonable starting range for one focused concept session. The danger of very long AI chats is that they can create the illusion of work while your own engagement slowly drops. A better pattern is to use AI in short loops: identify one concept, run a Socratic session, write your own summary, solve one or two problems without help, then return only if needed. This keeps the session anchored in your cognition rather than the AI’s output stream. It also prevents endless chatting, which often feels productive but turns into mental outsourcing. You want the model to spark effort, not become a comfort blanket. The best learning rhythm alternates guided interaction with independent recall.

6. What is the simplest version of a Study OS I can start with today?

Keep it simple. Start with one reusable prompt, one subject at a time, and one end-of-session habit. Your prompt should define the AI as a tutor, ask it to use questions before answers, and tell it not to reveal the final solution immediately. Then add a reflection habit: after every session, write three sentences in your own words explaining what you learned, where you were confused, and what you still need to review. That alone is enough to separate intentional learning from random AI use. You do not need a fancy dashboard, expensive app stack, or elaborate template system on Day 1. You need consistency. Once that is working, then you can build different study modes, research workflows, and subject-specific prompts. But the first win is behavioral: stop opening AI like a vending machine. Start opening it like a tutor room.

Day 1 is not about mastering every AI tool. It is about refusing to let the chatbox define the relationship.

Build the operating system first. Then let the tools plug into it.

That is how students stay curious, ethical, and hard to replace in an AI-heavy world. It also aligns with the bigger educational shift already underway, where institutions are moving beyond simple adoption and toward the harder question of how AI can enhance learning without hollowing out thought.

For more on responsible learning behavior, see The Beginner’s Guide to AI Ethics: Why Responsible AI Matters in 2025. For prompt ideas that support faster studying without crossing the line, read 10 Secret ChatGPT & Gemini Workflows Students Are Using to Study 3x Faster Without Cheating. And for the bigger question hanging over classrooms and campuses, revisit Universities Embrace AI — Will Students Get Smarter or Stop Thinking?.

Pro tip: Day 2 of this series, The Research Revolution, moves from the Study OS mindset to research and shows you how to find academic sources that AI won’t hallucinate.

About the Author

Girish Soni is the founder of TrendFlash and an independent AI strategist covering artificial intelligence policy, industry shifts, and real-world adoption trends. He writes in-depth analysis on how AI is transforming work, education, and digital society. His focus is on helping readers move beyond hype and understand the practical, long-term implications of AI technologies.