The AI Family Pact: How to Use AI as a “Socratic Tutor” Rather Than an “Answer Key”

Parental Anxiety in 2026: Why This Conversation Can’t Wait

In 2026, AI is no longer a “future” technology for your kids—it’s already woven into homework, search, and even their entertainment. Teens quietly ask chatbots for help with essays, maths steps, and sometimes even emotional advice, often more than parents realise.

Multiple surveys show the same split picture: most parents are comfortable when AI is used to look up facts or support schoolwork, but very few are okay with chatbots stepping into the role of emotional adviser or digital friend. At the same time, parents admit they feel under-prepared: they know AI will shape their child’s education and career but don’t yet feel confident explaining how to use it wisely.

The 2026 reality: AI isn’t optional for your child’s future—but letting it quietly become their “secret answer key” is not an option either.

TrendFlash’s guide to AI in schools tells the same story: AI can be a brilliant tutor or a shortcut that slowly kills curiosity, depending entirely on how it is used.

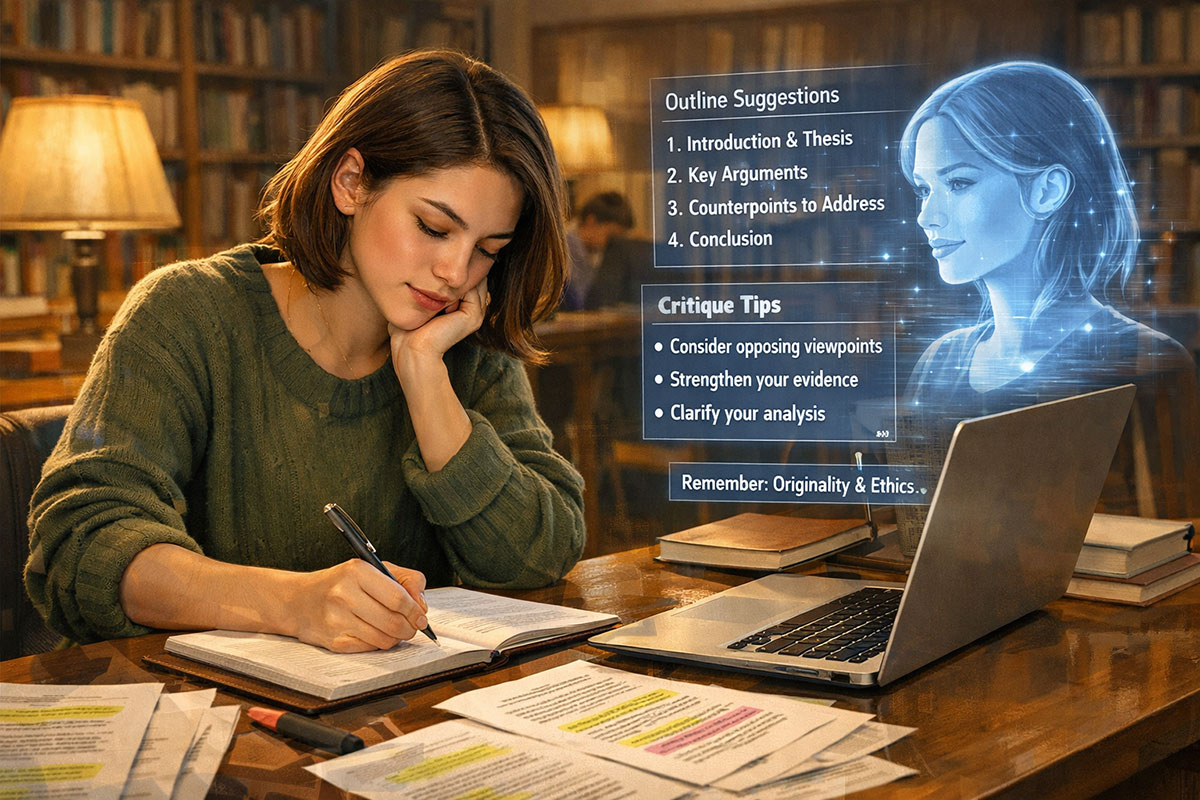

From Answer Key to Socratic Tutor

What goes wrong with “just give me the answer”

Most children’s first instinct with a powerful chatbot is simple: “Do this for me.” Prompts like “Write my essay on photosynthesis” or “Solve this math problem and show steps” turn AI into a high-speed answer key. It feels magical the first time—until it quietly becomes a crutch.

Teachers are already seeing this play out. Growing numbers of students admit they lean on AI for full answers, and a chunk of them are not sure where “smart help” ends and “cheating” begins. Parents, meanwhile, worry that constant shortcuts will blunt critical thinking and reduce their child’s ability to struggle productively with hard problems.

AI should feel like a thoughtful tutor sitting beside your child, not an invisible ghostwriter doing the assignment behind their back.

The “Socratic tutor” prompt (you can copy–paste this)

Here’s the small shift that changes everything: instead of asking the AI to produce work, ask it to ask questions and coach thinking.

Old style prompt:

“Write an essay on photosynthesis.”

New “Socratic tutor” prompt for a 10th-grade student:

“I am a 10th-grade student. Do not give me the full answer. Ask me step-by-step questions and mini-quizzes so that I can explain photosynthesis in my own words. Only give hints, not solutions. At the end, help me check and improve my explanation.”

Why this works so well:

- It locks in the student’s level: the AI tailors language and questions to 10th-grade understanding instead of university-level jargon.

- It forbids shortcuts: “no full answer” forces the AI into coach mode. Your child still has to think, recall, and explain.

- It ends with reflection: the final “check and improve” step helps students compare what they wrote with class notes or textbooks, reinforcing real learning.

You can adapt this pattern for any level: “I’m in Class 8 in India…”, “I’m a first-year engineering student…”, or “I’m preparing for my CBSE board exams…”. For more tools that support this style of learning, see other TrendFlash explainers such as the 2025 AI learning stack.
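For families comfortable with a little scripting, the adaptable pattern above can be sketched as a small template filler. This is only an illustration: the function name `build_socratic_prompt` and its two parameters are hypothetical, and the wording simply reuses the prompt quoted earlier with the level and topic swapped in.

```python
def build_socratic_prompt(level: str, topic: str) -> str:
    """Fill the Socratic-tutor prompt with a learner level and a topic.

    `level` is a phrase such as "a 10th-grade student";
    `topic` is what the student wants to understand.
    """
    return (
        f"I am {level}. Do not give me the full answer. "
        f"Ask me step-by-step questions and mini-quizzes so that I can explain "
        f"{topic} in my own words. Only give hints, not solutions. "
        f"At the end, help me check and improve my explanation."
    )


if __name__ == "__main__":
    # Reproduces the example prompt from this guide.
    print(build_socratic_prompt("a 10th-grade student", "photosynthesis"))
```

The same call works for other levels, for example `build_socratic_prompt("a first-year engineering student", "Ohm's law")`. The output is plain text your child can paste into whichever tool the family has agreed on.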

The 3-Step AI Family Pact (Fridge-Ready Rules)

A “Family Pact” turns fuzzy AI ethics into three simple rules everyone in the house understands. Think of it as your AI seatbelt policy.

Rule 1: Citation First

Rule: If the AI can’t show where it got its information, it can’t be used in homework.

Modern research assistants like Perplexity and some education-focused tools now provide clickable citations and original sources. That makes it easier for your child to cross-check facts in textbooks, trusted news, or scientific articles instead of blindly trusting the bot.

How to make this rule real at home:

- Prefer tools that show sources or a “References” panel.

- Ask your child to copy at least two non-AI sources into their notes for every AI-assisted answer.

- Ban the phrase “my source is ChatGPT” in final assignments; AI is a tool, not a source.

Family mantra: “If the AI can’t show its work, we don’t use its work.”

Rule 2: The Personal-Data Shield

Rule: No real names, school locations, or private family photos ever go into a public AI chatbot.

Child-safety groups are blunt about this: many parents underestimate how much personal data can be inferred from casual chats, uploads, and images. Even well-meaning tools may collect usage data to improve their models. For kids, that creates extra risk.

Turn this into a checklist:

- No full names of children, parents, teachers, or schools in prompts.

- No addresses, bus routes, or live location details.

- No uploading of family photos, report cards, or medical documents to general-purpose chatbots.

- Use anonymised descriptions: “a student in Class 9 at a Delhi school” is fine; the exact school name is not.
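If someone at home likes to tinker, the checklist above can be turned into a rough pre-send check. The sketch below assumes the family keeps its own short list of private terms (names, school, street); the function name `find_private_details` and the sample names are made up for illustration. It only does simple case-insensitive matching, so it is a reminder, not a guarantee, and it cannot inspect photos or documents.

```python
def find_private_details(prompt: str, private_terms: list[str]) -> list[str]:
    """Return the private terms that appear in a prompt (case-insensitive).

    `private_terms` is a list the family maintains itself, e.g. full names,
    the school name, or a street name. An empty result means none of the
    listed terms were found; it does not prove the prompt is safe.
    """
    lowered = prompt.lower()
    return [term for term in private_terms if term.lower() in lowered]


if __name__ == "__main__":
    # Hypothetical family list; replace with your own terms.
    family_terms = ["Asha Verma", "Sunrise Public School"]

    risky = "My name is Asha Verma and I study at Sunrise Public School."
    safe = "I am a student in Class 9 at a Delhi school."

    print(find_private_details(risky, family_terms))
    print(find_private_details(safe, family_terms))
```

A check like this fits best as a conversation starter: when it flags something, ask your child to rewrite the prompt using an anonymised description instead.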

This rule links naturally to broader conversations on digital safety and AI governance covered in pieces like AI global governance challenges in 2025 and why responsible AI matters more than ever.

Rule 3: The Human Edit

Rule: AI-generated text must be “humanised” before it leaves the house—rewritten in the student’s own voice with their examples and reflections.

Studies show that when AI is used as a brainstorming and drafting aid, students can actually improve higher-order thinking—if they stay actively involved in editing and sense-making. Problems arise when students copy-paste outputs word-for-word, losing their voice and sometimes their grasp of the material.

Make the Human Edit a habit:

- Highlight any sentence taken from AI and rewrite it in the student’s natural voice.

- Add at least one example from class, local news, or personal life to every major paragraph.

- Read the final piece out loud; if it doesn’t “sound like you”, change it.

AI can help your child think more clearly—but only if their own thoughts are still the loudest voice on the page.

Safe AI Tools vs Red-Flag Tools for Students

Not all AI tools are created for learning. Some are built as research assistants or tutors; others are generic chat apps, or even marketed as “homework done for you”. The category matters more than the brand name.

At-a-glance comparison

| Type | Example tools | Why they’re safer or riskier | How to use them in your Family Pact |
|---|---|---|---|
| Research copilots | Perplexity, NotebookLM (for uploaded docs) | Provide answers with citations or work only from your child’s own notes, reducing hallucinations and making verification easy. | Use for fact-finding, building reading lists, and quickly understanding complex sources. Always click through to check the original pages. |
| Purpose-built tutors | Khanmigo, school-linked tools | Designed for learning, not copying; they nudge students with questions, hints, and step-by-step explanations instead of full solutions. | Use with the “Socratic tutor” mindset: tell your child to ask for questions and practice problems, not finished essays. |
| Notebook-style organisers | NotebookLM, Notion AI (private space) | Help students organise and summarise textbooks, PDFs, and lecture notes they already have, instead of pulling random web content. | Use to turn class notes into summaries, flashcards, or possible exam questions, then quiz your child using those questions. |
| Unfiltered generic chat apps | Open chatbots inside social apps, anonymous “AI friend” bots | Often used late at night for venting or emotional advice, with fewer safeguards. Can create unhealthy attachment or expose kids to adult topics. | Keep off-limits for kids. If used at all by teens, it should be with clear rules and regular check-ins. |
| Copy-paste homework bots | Sites promising “instant essay” or “assignment solver” | Market themselves around shortcuts, rarely show sources, and encourage plagiarism, exactly what many universities and boards are now cracking down on. | Treat as red flags. Block these on home Wi‑Fi and talk openly about why they damage learning and trust. |

For deeper lists of student tools and workflows, see TrendFlash resources such as top AI tools students are using (and how to use them ethically) and secret AI study workflows without cheating.

How to Build Your Own AI Family Pact

Step 1: Name the anxiety out loud

Start by saying the quiet part together: “We know AI is going to be a big part of your future. We’re still learning. Let’s figure out how to use it well as a family.” Many parents report feeling unprepared and even intimidated by AI, but conversations work best when you admit that instead of pretending to have all the answers.

Gentle starter questions:

- “What AI tools are you and your friends actually using right now—for homework and for fun?”

- “When does AI feel like ‘helping you learn’ and when does it feel like ‘doing it for you’?”

- “Are there things you’d never ask AI about? Why?”

Goal of this step: Curiosity first, rules second. You’ll make better rules when you understand how your child already sees AI.

Step 2: Co-design the rules (don’t just announce them)

Co-creating the AI Family Pact makes it far more likely to stick. Research on AI literacy and digital-safety programs shows that children engage more when they help set the norms instead of simply being handed a list of don’ts.

Try this mini-workshop at home:

- On one sheet, write “Good AI Use” and list examples together (brainstorming, explaining steps, generating practice questions, summarising long documents).

- On another sheet, write “Not OK AI Use” (copying entire essays, emotional venting to bots, sharing personal data, bypassing school rules).

- Turn your three rules—Citation First, Personal-Data Shield, Human Edit—into your own language and sign them like a small contract.

You can support this with external context from pieces like AI literacy for all, which shows how schools and governments are starting to frame similar principles.

Step 3: Sync home rules with school rules

Most schools now have (or are writing) AI policies. Some allow AI for brainstorming but not for final drafts; others restrict specific tools but recommend approved ones. Yet surveys show many students and parents still don’t clearly understand what’s allowed.

Make sure your Family Pact doesn’t live in a bubble:

- Read your child’s school or university AI policy together and highlight overlaps with your three rules.

- Encourage your child to ask teachers: “Can I use AI to brainstorm?”, “Is it OK if I use a tool like Perplexity to find sources?”

- If your child has learning differences, talk to the school about where AI support is encouraged (e.g., reading support, note summaries) and where it’s not.

This alignment mirrors bigger conversations from events like the India AI Impact Summit 2026, where leaders stress that AI literacy has to move from policy documents into everyday classrooms and homes.

Why This Matters Now (and Why So Many Parents Are Searching for It)

Search interest in phrases like “AI for parents”, “AI tools for students”, and “is AI cheating” has spiked since generative AI went mainstream between 2024 and 2026. Surveys show that nearly all students now use AI in some form for school, but parents still feel a big knowledge gap.

Unlike product-launch news or one-off scandals, an AI Family Pact stays useful over time. Parents can come back to it at the start of each school year, before exam seasons, and whenever a new chatbot launches. It also connects to other TrendFlash coverage on AI in schools, responsible AI, and multi-agent systems, across categories like AI Ethics & Governance and AI in Health & Education.

Most importantly for families, this approach replaces fear with a plan. Instead of arguing about whether AI is good or bad, you’re asking better questions: How do we make it a Socratic tutor, not an answer key? What rules keep our child safe but still future-ready?

Where to Go Next on TrendFlash

If this guide helped, you can deepen your family’s AI roadmap with these related pieces:

- AI in Schools 2025: Parents’ Complete Guide – The Good, the Bad, and What to Do

- Top AI Tools Students Are Using – and How to Use Them Ethically

- AI Literacy for All: New Initiatives Teaching Students to Use and Critique AI

- The Future of Exams: How AI Is Redefining Student Assessments

And if you’re curious about how all of this connects to the bigger picture—agentic AI, multi-agent ecosystems, and the Delhi AI moment—pair this family-focused guide with macro stories like The Agentic Era Begins and India’s AI Impact Summit 2026.

About the Author

Girish Soni is the founder of TrendFlash and an independent AI strategist covering artificial intelligence policy, industry shifts, and real-world adoption trends. He writes in-depth analysis on how AI is transforming work, education, and digital society. His focus is on helping readers move beyond hype and understand the practical, long-term implications of AI technologies.